Unsupervised Learning of Multi-Hypothesized Pick-and-Place Task Templates via Crowdsourcing

The objective of this work is to scale up robot learning from demonstration (LfD) to larger and more complex tasks than currently possible, in order to enable robots to move out of highly constrained, repetitive applications, such as manufacturing, and into human-oriented environments with greater autonomy. Even in its simplest form, pick-and-place is a hard problem due to the uncertainty arising from noisy input demonstrations and non-deterministic real-world environments. This work introduces a novel method for goal-based learning from demonstration in which we learn, in an unsupervised manner via Gaussian Mixture Models, over a large corpus of human-demonstrated ground-truth placement locations. The goal is to provide a multi-hypothesis solution for a given task description that can later be used when executing the task itself. In addition to learning the actual arrangements of the items in question, we also autonomously extract which frames of reference are important in each demonstration. We further verify these findings in a subsequent evaluation and execution on a mobile manipulator.
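The learning step described above can be illustrated with a small, self-contained sketch. It assumes 2-D tabletop placement coordinates, diagonal covariances, and a fixed number of components `k`; the actual feature space, covariance model, and model selection used in this work are not specified here, so these are simplifying assumptions. `fit_gmm` runs expectation-maximization and returns the components ranked by weight, so each mean is one placement hypothesis. `relevant_frame` is a hypothetical heuristic for the frame-of-reference step: it re-expresses the placements relative to each candidate frame and picks the frame in which they cluster most tightly. All names are illustrative.

```python
import math
import statistics
from typing import Dict, List, Optional, Tuple

Point = Tuple[float, float]


def _gauss_diag(p: Point, mean: Point, var: Point) -> float:
    """Density of a 2-D Gaussian with diagonal covariance at point p."""
    norm = 1.0 / (2.0 * math.pi * math.sqrt(var[0] * var[1]))
    expo = -0.5 * sum((p[d] - mean[d]) ** 2 / var[d] for d in range(2))
    return norm * math.exp(expo)


def fit_gmm(points: List[Point], k: int, iters: int = 50, min_var: float = 1e-4):
    """Fit a k-component diagonal-covariance GMM with EM.

    Returns (weights, means, variances) sorted by descending weight, so the
    first mean is the most strongly supported placement hypothesis.
    """
    n = len(points)
    ordered = sorted(points)
    # Deterministic initialisation: means spread over the data sorted by x,
    # variances set to the overall per-axis variance of the data.
    means = [ordered[(2 * j + 1) * n // (2 * k)] for j in range(k)]
    spread = (max(statistics.pvariance([p[0] for p in points]), min_var),
              max(statistics.pvariance([p[1] for p in points]), min_var))
    variances = [spread] * k
    weights = [1.0 / k] * k
    for _ in range(iters):
        # E-step: responsibility of each component for each point.
        resp = []
        for p in points:
            dens = [weights[j] * _gauss_diag(p, means[j], variances[j]) for j in range(k)]
            total = sum(dens) or 1e-300  # guard against total underflow
            resp.append([d / total for d in dens])
        # M-step: re-estimate weight, mean and per-axis variance of each component.
        for j in range(k):
            nj = sum(r[j] for r in resp) + 1e-12
            mx = sum(r[j] * p[0] for r, p in zip(resp, points)) / nj
            my = sum(r[j] * p[1] for r, p in zip(resp, points)) / nj
            vx = sum(r[j] * (p[0] - mx) ** 2 for r, p in zip(resp, points)) / nj
            vy = sum(r[j] * (p[1] - my) ** 2 for r, p in zip(resp, points)) / nj
            weights[j] = nj / n
            means[j] = (mx, my)
            variances[j] = (max(vx, min_var), max(vy, min_var))
    order = sorted(range(k), key=lambda j: -weights[j])
    return ([weights[j] for j in order], [means[j] for j in order],
            [variances[j] for j in order])


def relevant_frame(placements: List[Point], frames: Dict[str, List[Point]]) -> Optional[str]:
    """Pick the candidate frame in which the placements are most consistent.

    frames maps a frame name to that frame's origin in each demonstration
    (same order as placements). Orientation is ignored for brevity.
    """
    best_name, best_spread = None, float("inf")
    for name, origins in frames.items():
        rel = [(p[0] - o[0], p[1] - o[1]) for p, o in zip(placements, origins)]
        spread = (statistics.pvariance([r[0] for r in rel])
                  + statistics.pvariance([r[1] for r in rel]))
        if spread < best_spread:
            best_name, best_spread = name, spread
    return best_name
```

In practice `k` would be chosen with a model-selection criterion such as BIC, and orientations would also be compared when ranking frames.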

Interactive Hierarchical Task Learning from a Single Demonstration

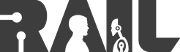

We have developed learning and interaction algorithms to support a human teaching hierarchical task models to a robot using a single demonstration, in the context of a mixed-initiative interaction with bidirectional communication. In particular, we have identified and implemented two important heuristics for suggesting task groupings: one based on the physical structure of the manipulated artifact and one based on the data flow between tasks. We have evaluated our algorithms with users in a simulated environment and shown both that the overall approach is usable and that the grouping suggestions significantly improve the learning and interaction.
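As a rough illustration of the data-flow heuristic only, the sketch below greedily groups consecutive demonstrated steps whenever a step consumes something produced earlier in the current group. The `Step` type, the step names, and the greedy rule are hypothetical simplifications rather than the algorithm from the cited paper, and the physical-structure heuristic is not shown.

```python
from typing import FrozenSet, List, NamedTuple


class Step(NamedTuple):
    name: str
    inputs: FrozenSet[str]   # objects/values the step consumes
    outputs: FrozenSet[str]  # objects/values the step produces


def suggest_groups(steps: List[Step]) -> List[List[str]]:
    """Greedily group consecutive steps linked by data flow."""
    groups: List[List[str]] = []
    produced: set = set()  # everything produced by the group being built
    for step in steps:
        if groups and step.inputs & produced:
            groups[-1].append(step.name)  # consumes an earlier output: same group
            produced |= step.outputs
        else:
            groups.append([step.name])    # no data-flow link: start a new group
            produced = set(step.outputs)
    return groups
```

Each suggested group would then be offered to the teacher for confirmation or correction, in keeping with the mixed-initiative interaction described above.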

- Anahita Mohseni-Kabir, Sonia Chernova, Charles Rich, Candy Sidner, and Daniel Miller. Interactive hierarchical task learning from a single demonstration. In ACM/IEEE International Conference on Human-Robot Interaction (HRI), pages 1–8. ACM/IEEE, 2015.

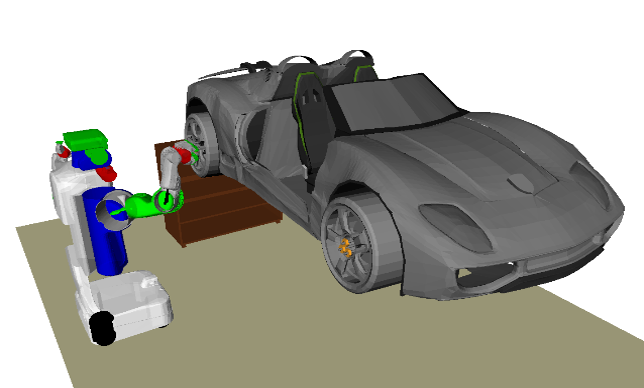

Solving and Explaining Analogy Questions Using Semantic Networks

Analogies are a fundamental human reasoning pattern that relies on relational similarity. Understanding how analogies are formed facilitates the transfer of knowledge between contexts. The approach presented in this work focuses on obtaining precise interpretations of analogies. We leverage noisy semantic networks to answer and explain a wide spectrum of analogy questions.

The core of our contribution, the Semantic Similarity Engine, consists of methods for extracting and comparing graph contexts that reveal the relational parallelism on which analogies are based, while mitigating uncertainty in the semantic network. We demonstrate these methods in two tasks: answering multiple-choice analogy questions and generating human-readable analogy explanations. We evaluate our approach on two datasets totaling 600 analogy questions. Our results show reliable performance and a low false-positive rate in question answering; human evaluators agreed with 96% of our analogy explanations.
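A heavily simplified sketch of answering a multiple-choice analogy by comparing relational contexts follows. It assumes the semantic network is a list of (head, relation, tail) triples with illustrative relation names, and it compares only the direct relations between the two words of each pair using Jaccard similarity; the Semantic Similarity Engine's graph-context extraction is richer than this. The hypothetical `threshold` parameter lets the solver abstain rather than guess, which is one simple way to keep false positives low.

```python
from typing import List, Optional, Set, Tuple

Triple = Tuple[str, str, str]  # (head, relation, tail)
Pair = Tuple[str, str]


def relations(graph: List[Triple], a: str, b: str) -> Set[str]:
    """Direct relations linking a to b; reverse edges are marked with '^-1'."""
    out: Set[str] = set()
    for h, r, t in graph:
        if h == a and t == b:
            out.add(r)
        elif h == b and t == a:
            out.add(r + "^-1")
    return out


def jaccard(x: Set[str], y: Set[str]) -> float:
    union = x | y
    return len(x & y) / len(union) if union else 0.0


def answer(graph: List[Triple], stem: Pair, choices: List[Pair],
           threshold: float = 0.0) -> Optional[Pair]:
    """Pick the choice whose relations best match the stem's, or abstain."""
    target = relations(graph, *stem)
    scored = [(jaccard(target, relations(graph, *c)), c) for c in choices]
    best_score, best = max(scored)
    return best if best_score > threshold else None
```

For example, with `bird AtLocation nest` and `nest CreatedBy bird` in the graph, the stem "bird : nest" matches "bee : hive" (same two relations) better than "car : road" (one shared relation).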

- Adrian Boteanu and Sonia Chernova. Solving and explaining analogy questions using semantic networks. In AAAI Conference on Artificial Intelligence, pages 1–8. AAAI, 2015.

Object Recognition Database for Robotic Grasping

Object recognition and manipulation are critical for enabling robots to operate in household environments. Many grasp planners can estimate grasps based on object shape, but they ignore key information about non-visual object characteristics. Object model databases can account for this information, but existing methods for database construction are time- and resource-intensive. We present an easy-to-use system for constructing object models for 3D object recognition and manipulation, made possible by advances in web robotics. The database consists of point clouds generated using a novel iterative point cloud registration algorithm. The system requires no additional equipment beyond the robot, and non-expert users can demonstrate grasps through an intuitive web interface. We validated the system with data collected from both a crowdsourcing user study and expert demonstrations, and showed that the demonstration approach outperforms purely vision-based grasp planning approaches for a wide variety of object classes.
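The registration step can be illustrated with standard point-to-point iterative closest point (ICP), shown here in 2-D for brevity. This is a generic textbook technique swapped in for illustration, not the novel registration algorithm described above; it uses brute-force nearest neighbours and a closed-form rigid fit on each iteration, and the function names are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def best_rigid_2d(src: List[Point], dst: List[Point]) -> Tuple[float, float, float]:
    """Closed-form rotation angle and translation minimizing squared error (2-D Kabsch)."""
    n = len(src)
    csx, csy = sum(p[0] for p in src) / n, sum(p[1] for p in src) / n
    cdx, cdy = sum(q[0] for q in dst) / n, sum(q[1] for q in dst) / n
    num = den = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay, bx, by = px - csx, py - csy, qx - cdx, qy - cdy
        num += ax * by - ay * bx  # cross terms
        den += ax * bx + ay * by  # dot terms
    th = math.atan2(num, den)
    c, s = math.cos(th), math.sin(th)
    return th, cdx - (c * csx - s * csy), cdy - (s * csx + c * csy)


def icp(src: List[Point], dst: List[Point], iters: int = 30, tol: float = 1e-12):
    """Align src to dst; returns (theta, tx, ty, mean squared residual)."""
    theta, tx, ty = 0.0, 0.0, 0.0
    cur = list(src)
    prev_err = err = float("inf")
    for _ in range(iters):
        # Correspondences: nearest destination point for each current point.
        matched = [min(dst, key=lambda q: (q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2)
                   for p in cur]
        dth, dtx, dty = best_rigid_2d(cur, matched)
        c, s = math.cos(dth), math.sin(dth)
        cur = [(c * x - s * y + dtx, s * x + c * y + dty) for x, y in cur]
        # Compose the increment with the running transform.
        theta += dth
        tx, ty = c * tx - s * ty + dtx, s * tx + c * ty + dty
        err = sum((p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2
                  for p, q in zip(cur, matched)) / len(cur)
        if prev_err - err < tol:
            break
        prev_err = err
    return theta, tx, ty, err
```

Plain ICP converges only from a reasonable initial alignment, which is one reason database-construction pipelines typically add initialization or outlier handling on top of it.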

- David Kent, Morteza Behrooz, and Sonia Chernova. Crowdsourcing the Construction of a 3D Object Recognition Database for Robotic Grasping. In IEEE International Conference on Robotics and Automation (ICRA), 2014.

- David Kent and Sonia Chernova. Construction of an Object Manipulation Database from Grasp Demonstrations. In IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2014.

DARPA Robotics Challenge

The DARPA Robotics Challenge seeks to develop ground robots capable of executing complex tasks in dangerous, degraded, human-engineered environments, particularly in the area of disaster response. The RAIL research group is part of the 10-university Track A DRC-HUBO team led by Drexel University. The project is a collaboration with Dmitry Berenson and Rob Lindeman at WPI. Our work focuses on a user-guided manipulation framework for high-degree-of-freedom robots operating in environments with limited communication.

- Nicholas Alunni, Calder Phillips-Grafflin, Halit Bener Suay, Jim Mainprice, Daniel Lofaro, Dmitry Berenson, Sonia Chernova, Robert W Lindeman, and Paul Oh. Toward a user-guided manipulation framework for high-DOF robots with limited communication. Journal of Intelligent Service Robotics, Special Issue on Technologies for Practical Robot Applications, 2014.

- Jim Mainprice, Calder Phillips-Grafflin, Halit Bener Suay, Nicholas Alunni, Daniel Lofaro, Dmitry Berenson, Sonia Chernova, Robert W Lindeman, and Paul Oh. From autonomy to cooperative traded control of humanoid manipulation tasks with unreliable communication: System design and lessons learned. In IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pages 3767–3774. IEEE, 2014.

- Nicholas Alunni, Calder Phillips-Grafflin, Halit Bener Suay, Daniel Lofaro, Dmitry Berenson, Sonia Chernova, Robert W. Lindeman and Paul Oh. Toward A User-Guided Manipulation Framework for High-DOF Robots with Limited Communication, In the IEEE International Conference on Technologies for Practical Robot Applications (TePRA), 2013.

Message Authentication Codes for Secure Remote Non-Native Client Connections to ROS Enabled Robots

Recent work in the robotics community has led to the emergence of cloud-based solutions and remote clients. Such work allows robots to effectively distribute complex computations across multiple machines, and allows remote clients, both human and automated, to control robots across the globe. With the increasing use and importance of such ideas, it is crucial not to overlook the critical issue of security in these systems. This project demonstrates the use of web tokens for achieving secure authentication for remote, non-native clients in the widely used Robot Operating System (ROS) middleware. The software is written in a system-independent manner and is available as open source on GitHub.
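The general idea behind MAC-based authentication can be sketched with Python's standard `hmac` module. This is an assumption-laden illustration, not the project's actual message format or hash choice: a trusted web server that shares a secret key with the robot signs a hypothetical tuple of fields (client address, destination address, random nonce, timestamp, user level) on behalf of an authenticated user, and the robot side recomputes the MAC, compares it in constant time, and rejects stale timestamps to limit replay.

```python
import hashlib
import hmac
import time
from typing import Optional


def sign(secret: bytes, client: str, dest: str, rand: str, t: int, level: str) -> str:
    """MAC over the (hypothetical) request fields, computed by the trusted server."""
    msg = "|".join([client, dest, rand, str(t), level]).encode("utf-8")
    return hmac.new(secret, msg, hashlib.sha256).hexdigest()


def verify(secret: bytes, mac: str, client: str, dest: str, rand: str, t: int,
           level: str, now: Optional[float] = None, max_skew: float = 5.0) -> bool:
    """Robot-side check: fresh timestamp and matching MAC."""
    now = time.time() if now is None else now
    if abs(now - t) > max_skew:
        return False  # stale or future-dated: limits replay of captured tokens
    expected = sign(secret, client, dest, rand, t, level)
    return hmac.compare_digest(expected, mac)  # constant-time comparison
```

Because the secret never reaches the browser, a non-native client can prove it was authorized without holding the key; a real format would also need an unambiguous field encoding rather than a plain `|` separator.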

- Russell Toris, Craig Shue, and Sonia Chernova. Message Authentication Codes for Secure Remote Non-Native Client Connections to ROS Enabled Robots. In IEEE International Conference on Technologies for Practical Robot Applications (TePRA), April 2014.

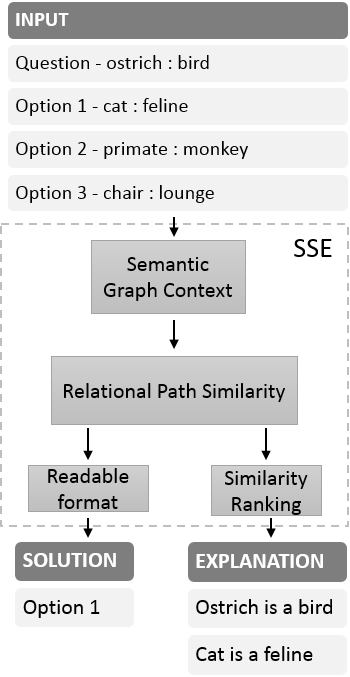

RobotsFor.Me

This project aims to develop a web-based, platform-independent tool for conducting large-scale robotics user studies online. Building upon the open-source PR2 Remote Lab, RobotsFor.Me (http://RobotsFor.me) enables users to remotely control robots through a common web browser, allowing researchers to conduct user studies with participants across the globe. Still under development, the current version of RobotsFor.Me includes a web interface for robot control, as well as a Robot Management System for managing users and different study conditions. The complete system is platform-independent and to date has been tested with the Rovio, youBot, and PR2 platforms in both physical and simulated environments. RobotsFor.Me is the first robot remote lab designed for use by the general public.

- Russell Toris, David Kent, and Sonia Chernova. The Robot Management System: A Framework for Conducting Human-Robot Interaction Studies Through Crowdsourcing. Journal of Human-Robot Interaction, 2014.

- National Geographic, "Robot Revolution? Scientists Teach Robots to Learn."

Robot Learning from Demonstration

Robot learning from demonstration (LfD) research focuses on algorithms that enable a robot to learn new task policies from demonstrations performed by a human teacher. See the Survey of Robot Learning from Demonstration for more information on this research area. Our current work includes the first comparative evaluation of leading algorithms in this area and the development of new multi-strategy learning algorithms:

- Halit Bener Suay, Russell Toris, and Sonia Chernova. A Practical Comparison of Three Robot Learning from Demonstration Algorithms. International Journal of Social Robotics, Special Issue on Learning from Demonstration, Volume 4, Issue 4, pages 319–330, 2012.

- Osentoski S., Pitzer B., Crick C., Graylin J., Dong S., Grollman D., Suay H.B., and Jenkins O.C. Remote Robotic Laboratories for Learning from Demonstration. International Journal of Social Robotics, Special Issue on Learning from Demonstration, Volume 4, Issue 4, pages 449–461, 2012.

- Halit Bener Suay, Joseph Beck, Sonia Chernova. Using Causal Models for Learning from Demonstration. AAAI Fall Symposium on Robots Learning Interactively from Human Teachers, 2012.

- Halit Bener Suay and Sonia Chernova. A Comparison of Two Algorithms for Robot Learning from Demonstration. In the IEEE International Conference on Systems, Man, and Cybernetics, 2011.

- Halit Bener Suay and Sonia Chernova. Effect of the Human Guidance and State Space Size on Interactive Reinforcement Learning. In the IEEE International Symposium on Robot and Human Interactive Communication (Ro-Man), 2011.
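To make the basic LfD setting concrete, the sketch below learns a policy from demonstrated (state, action) pairs with k-nearest-neighbour classification, one of the simplest policy-learning approaches. It is a generic illustration with hypothetical names, not necessarily one of the algorithms evaluated in the papers listed above.

```python
import math
from collections import Counter
from typing import Callable, List, Sequence, Tuple

Demo = List[Tuple[Sequence[float], str]]  # (state features, demonstrated action)


def knn_policy(demos: Demo, k: int = 1) -> Callable[[Sequence[float]], str]:
    """Return a policy mapping a state to the majority action of its k nearest demos."""
    def policy(state: Sequence[float]) -> str:
        nearest = sorted(demos, key=lambda d: math.dist(d[0], state))[:k]
        votes = Counter(action for _, action in nearest)
        return votes.most_common(1)[0][0]
    return policy
```

Such a learner simply reproduces the teacher's choices near demonstrated states, which is why the amount and coverage of demonstrations strongly affect how well the learned policy generalizes.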

Cloud Primer: Leveraging Common Sense Computing for Early Childhood Literacy

Providing young children with opportunities to develop early literacy skills is important to their success in school, their success in learning to read, and their success in life. This project focuses on the creation of a new interactive reading primer technology on tablet computers that will foster early literacy skills and shared parent-child reading through the use of a targeted discussion-topic suggestion system aimed at the adult participant. The Cloud Primer will crowdsource the interactions and discussions of parent-child dyads across a community of readers. It will then leverage this information in combination with a common sense knowledge base to develop computational models of the interactions. These models will then be used to provide context-sensitive discussion topic suggestions to parents during the shared reading activity with young children.
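A toy sketch of how crowdsourced discussions and a common sense knowledge base might be combined into topic suggestions is shown below. Everything here is a hypothetical simplification: the knowledge base is a small dictionary from a concept to related concepts, the page's concepts are assumed to be already extracted, and popularity is a plain count of the topics other parent-child dyads discussed.

```python
from collections import Counter
from typing import Dict, List, Set


def suggest_topics(page_concepts: Set[str], kb: Dict[str, Set[str]],
                   crowd_log: List[str], n: int = 2) -> List[str]:
    """Rank topics related to the current page by how often other readers discussed them."""
    candidates = set(page_concepts)
    for concept in page_concepts:
        candidates |= kb.get(concept, set())  # expand with common sense neighbours
    counts = Counter(t for t in crowd_log if t in candidates)
    # Most-discussed first; ties broken alphabetically for determinism.
    return sorted(candidates, key=lambda t: (-counts[t], t))[:n]
```

For a page about a farm, related concepts such as "cow" or "tractor" that many other families talked about would be suggested to the parent first.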

- Adrian Boteanu and Sonia Chernova. Modeling Discussion Topics in Interactions with a Tablet Reading Primer. International Conference on Intelligent User Interfaces, 2013.

- Adrian Boteanu and Sonia Chernova. Modeling Topics in User Dialog for Interactive Tablet Media. Workshop on Human Computation in Digital Entertainment at the Eighth Annual AAAI Conference on Artificial Intelligence and Interactive Digital Entertainment, 2012.

Human-Agent Transfer

Human-Agent Transfer (HAT) is a policy learning technique that combines transfer learning, learning from demonstration and reinforcement learning to achieve rapid learning and high performance in complex domains. Using this technique we can effectively transfer knowledge from a human to an agent, even when they have different perceptions of state.
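One way to let a demonstrated policy speed up reinforcement learning is probabilistic policy reuse: early on the agent mostly follows the policy derived from the human, and control shifts to its own Q-values as the reuse probability decays. The sketch below applies this to a hypothetical 1-D corridor task with tabular Q-learning. In HAT the demonstrations would first be summarized into a policy; here that summary is replaced by a hand-written rule, and all parameter values are illustrative.

```python
import random
from typing import Callable, Dict, Tuple


def q_learning_with_demo(demo_policy: Callable[[int], int], n_states: int = 6,
                         episodes: int = 200, alpha: float = 0.5, gamma: float = 0.9,
                         epsilon: float = 0.1, psi: float = 0.9, decay: float = 0.97,
                         seed: int = 0) -> Dict[Tuple[int, int], float]:
    """Tabular Q-learning on a corridor (start at 0, reward 1 at the right end)
    with probabilistic reuse of a demonstrated policy."""
    rng = random.Random(seed)
    actions = (-1, 1)
    goal = n_states - 1
    q = {(s, a): 0.0 for s in range(n_states) for a in actions}
    for _ in range(episodes):
        s = 0
        for _step in range(100):
            if rng.random() < psi:
                a = demo_policy(s)  # reuse the human-derived policy
            elif rng.random() < epsilon:
                a = rng.choice(actions)  # random exploration
            else:
                a = max(actions, key=lambda b: q[(s, b)])  # greedy on own Q-values
            s2 = min(max(s + a, 0), goal)
            r = 1.0 if s2 == goal else 0.0
            # Terminal transitions do not bootstrap.
            target = r if s2 == goal else r + gamma * max(q[(s2, b)] for b in actions)
            q[(s, a)] += alpha * (target - q[(s, a)])
            s = s2
            if s == goal:
                break
        psi *= decay  # gradually hand control over to the learned Q-values
    return q
```

Setting `psi=0.0` turns the same function into plain Q-learning, which makes it easy to compare learning with and without the demonstrated policy.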

- Matthew Taylor, Halit Bener Suay and Sonia Chernova. Integrating Reinforcement Learning with Human Demonstrations of Varying Ability. In the International Conference on Autonomous Agents and Multi-Agent Systems (AAMAS), Taipei, Taiwan, 2011.

- Matthew E. Taylor, Halit Bener Suay and Sonia Chernova. Using Human Demonstrations to Improve Reinforcement Learning. In the AAAI 2011 Spring Symposium: Help Me Help You: Bridging the Gaps in Human-Agent Collaboration, Palo Alto, CA, 2011.

RoboCup Autonomous Robot Soccer

RoboCup is an international competition that aims to promote AI and robotics research through the development of autonomous soccer-playing robots. In 2010 and 2011, WPI competed in the Standard Platform League, which requires all teams to use Aldebaran Nao robots. The robots are not remote-controlled in any way; they observe the world through two head-mounted cameras and use this information to recognize objects in the environment and their own location on the field. Robots communicate with each other over the wireless network and use on-board processing to decide which actions to take. Here is an article describing the event and the WPI Warriors team.

Open Source Kinect Interface for Humanoid Robot Control

The ROS Nao-OpenNI package provides gesture-based control for humanoid robots using the Microsoft Kinect sensor. The video on the right shows the code being used to control an Aldebaran Nao.
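The core of gesture-based teleoperation of this kind is retargeting: computing a joint angle from the tracked human skeleton and mapping it into the robot's joint range. The sketch below does this for elbow flexion from three tracked 3-D joint positions. It is not code from the Nao-OpenNI package; the function names, the flexion convention, and the limits `lo`/`hi` are hypothetical, and the Nao's real joint limits and sign conventions would have to come from its documentation.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def joint_angle(a: Vec3, b: Vec3, c: Vec3) -> float:
    """Angle at b (radians) between segments b->a and b->c; assumes non-zero segments."""
    u = [a[i] - b[i] for i in range(3)]
    v = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(u, v))
    cos = dot / (math.hypot(*u) * math.hypot(*v))
    return math.acos(max(-1.0, min(1.0, cos)))  # clamp against rounding error


def to_robot_elbow(shoulder: Vec3, elbow: Vec3, hand: Vec3, lo: float, hi: float) -> float:
    """Map human elbow flexion (0 = straight arm) to a command clamped to [lo, hi]."""
    flex = math.pi - joint_angle(shoulder, elbow, hand)
    return min(max(flex, lo), hi)
```

Clamping keeps commands inside the robot's range even when the human moves further than the robot can follow; a full controller would also smooth the angles over time to suppress skeleton-tracking jitter.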